Good Catch! Quality control when working with AI

Over the last few months, I have been working intensively with Claude Code, ChatGPT, and other AI tools. It has been a wild learning experience, and at times an emotional roller coaster. Will my skills become redundant? Can anyone do research now? How do I actually use these tools well?

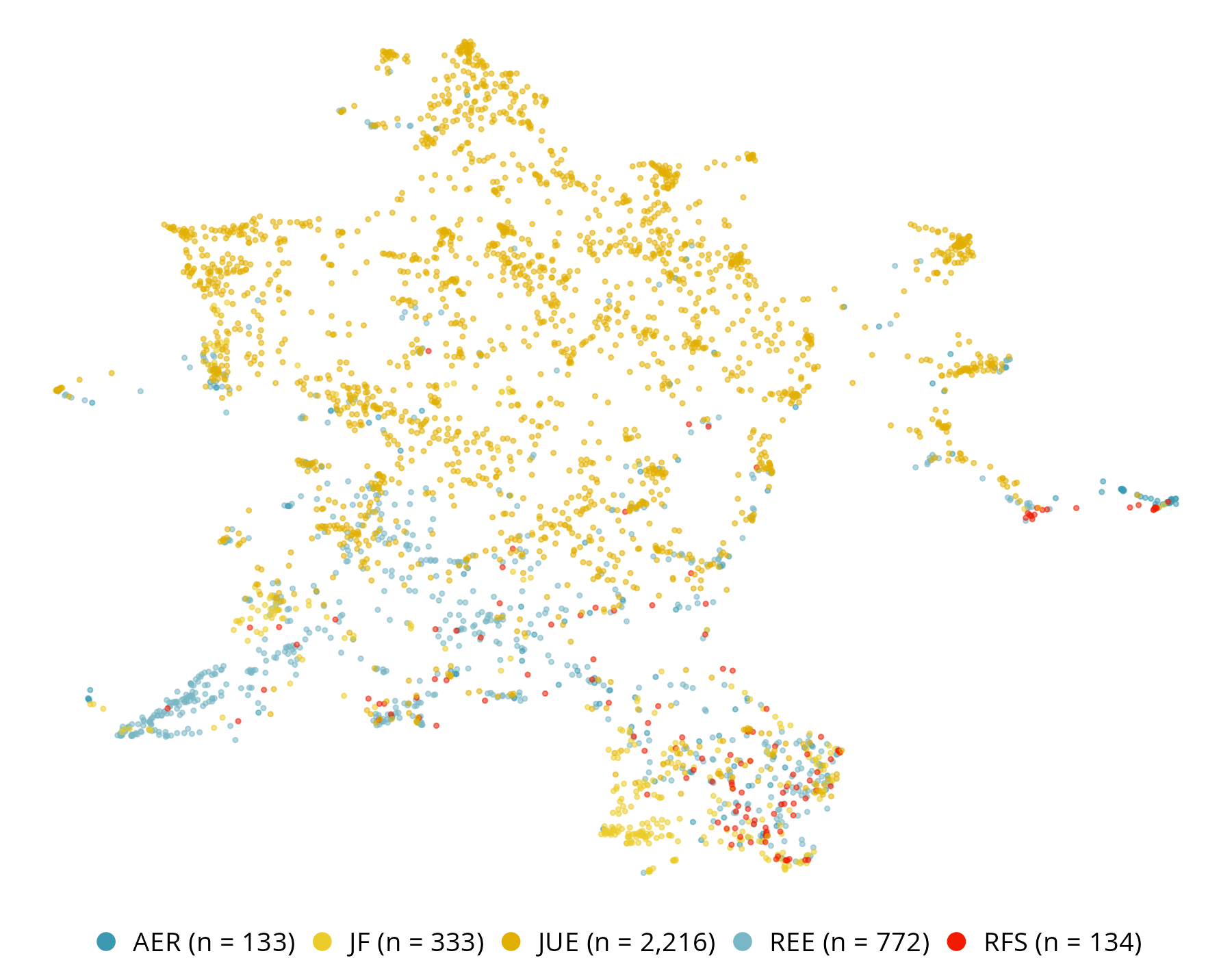

The way I “do stuff” has changed tremendously. The long nights of getting the data ducks in a neat row are disappearing. Visualisations and interactive data explorations are so much easier now. I love those interactive HTML slides that let me quickly zoom into spatial estimation output, much faster than importing everything into GIS.

But the longer I work this way, the more I realise that the way I “think about stuff” has not fundamentally changed. A lot of the real work still happens away from the keyboard: while cycling to work, doing the dishes, sitting in a meeting, or mentally turning over a research step. Running empirical tests requires as many critical checks as before. We still need to think carefully about data characteristics and limitations, the data generation process, measurement, context, theory, and links to other sources.

Simply accepting the output from Claude Code, however polished it looks, is a recipe for disaster. And that will not change, even with better models. Quality control is as important as ever.

To ensure quality, a researcher needs to know their empirics, the literature, the theory, and how their data were actually generated in the real world. AI promises to automate many steps in the research process. But this does not mean an erosion of true research skill. On the contrary: the better the tools become, the more important it is to identify mistakes and to earn another “Good catch!” response from Claude Code. Maybe we are not redundant (yet).